Google’s Gemini 2.0 Flash Now Lets You Edit Images In Natural Language

Google’s Gemini 2.0 Flash model now lets you edit images natively using natural language. Unlike earlier multimodal systems that paired separate models (for example, a language model together with Imagen 3 for image generation), Gemini 2.0 Flash generates images directly within the same system that processes text. This removes the hand-off between models, which cuts latency.

Since Gemini 2.0 Flash no longer depends on Imagen 3, it offers faster responses and smoother interactions. Plus, you can even embed longer text directly onto images!

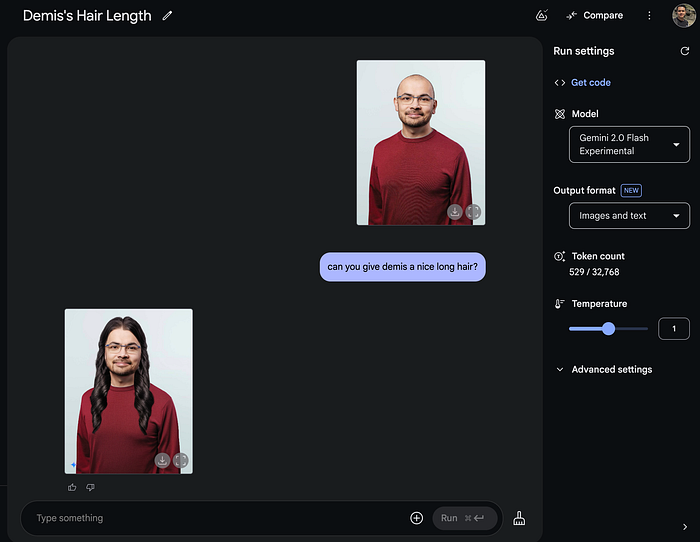

Check out this example where I transformed Google DeepMind’s CEO, Sir Demis Hassabis, into a long-haired dude.

Here’s another example showing Gemini adding a chocolate drizzle to plain croissants.

This is mind-blowing because nothing else in the original image changed except for the added chocolate drizzle, which, by the way, looks incredibly realistic.

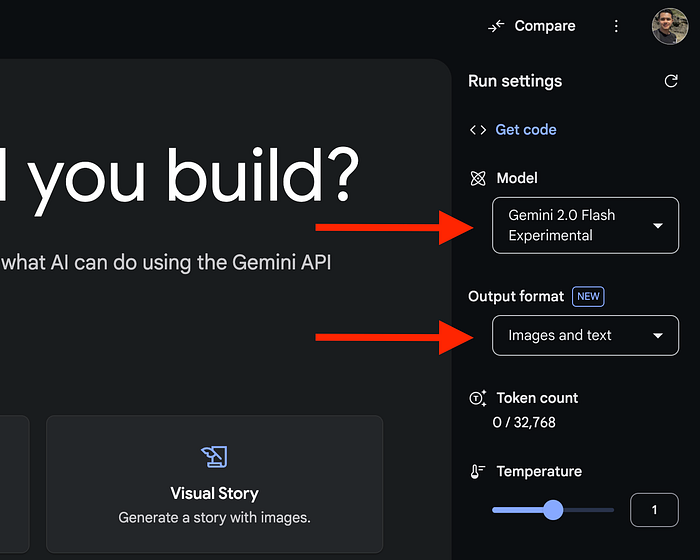

Here’s how it works

To get started, head over to Google’s AI Studio, log in with your Google account, and set the model to Gemini 2.0 Flash Experimental. Also make sure that the Output format is set to “Images and text”.

Then, upload your image file by clicking on the “+” button at the bottom-right corner of the prompt field. To illustrate, here’s a playful edit I made to an image of a fox. I dressed him in a puffer jacket because he might feel chilly up there in the icy mountains!
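
If you would rather script the same edit than click through AI Studio, here is a rough sketch of how the request might look against the Gemini REST API, using only Python's standard library. Treat the details as assumptions rather than the official recipe: the model ID `gemini-2.0-flash-exp`, the `v1beta` endpoint, and the helper names `build_edit_request`, `extract_images`, and `edit_image` are illustrative, so check the current API documentation before relying on them. The `responseModalities` setting plays the role of the “Images and text” output format above.

```python
import base64
import json
import urllib.request

# Assumption: model ID for the experimental image-capable model; verify it
# against the current model list, since experimental IDs change.
MODEL = "gemini-2.0-flash-exp"
API_URL = "https://generativelanguage.googleapis.com/v1beta/models/{model}:generateContent"


def build_edit_request(prompt, image_bytes, mime_type="image/png"):
    """Build the JSON body: the text instruction plus the image to edit."""
    return {
        "contents": [{
            "parts": [
                {"text": prompt},
                {"inline_data": {
                    "mime_type": mime_type,
                    "data": base64.b64encode(image_bytes).decode("ascii"),
                }},
            ]
        }],
        # Ask for both text and image output, like "Images and text" in AI Studio.
        "generationConfig": {"responseModalities": ["TEXT", "IMAGE"]},
    }


def extract_images(response):
    """Return the raw bytes of every image part found in a response dict."""
    images = []
    for candidate in response.get("candidates", []):
        for part in candidate.get("content", {}).get("parts", []):
            # Accept either key spelling, since JSON responses may use camelCase.
            inline = part.get("inlineData") or part.get("inline_data")
            if inline:
                images.append(base64.b64decode(inline["data"]))
    return images


def edit_image(prompt, image_path, api_key, model=MODEL):
    """Send the edit request and return the edited image(s) as bytes."""
    with open(image_path, "rb") as f:
        body = build_edit_request(prompt, f.read())
    request = urllib.request.Request(
        API_URL.format(model=model),
        data=json.dumps(body).encode("utf-8"),  # supplying data makes this a POST
        headers={"Content-Type": "application/json", "x-goog-api-key": api_key},
    )
    with urllib.request.urlopen(request) as resp:
        return extract_images(json.load(resp))
```

With an API key in hand, a call such as `edit_image("Dress the fox in a puffer jacket", "fox.png", api_key)` should return a list of image byte strings that you can write to disk (`fox.png` and `api_key` are placeholders here). Keeping the request building and response parsing in separate functions also makes them easy to check without touching the network.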